AI for LinkedIn: What to Automate, What to Never Outsource

1. Why full autopilot seduces—and betrays

Generative models collapse latency between blank canvas and persuasive paragraphs. That speed feels like productivity until you publish claims nobody verified, mis-anonymise a sensitive customer arc, or paste confident numbers your metrics never supported. LinkedIn multiplies harm because posts attract named comments, threads archive longer than product roadmaps, and prospects treat your feed as behavioural evidence about delivery reliability. Automation should reduce toil, not outsource accountability.

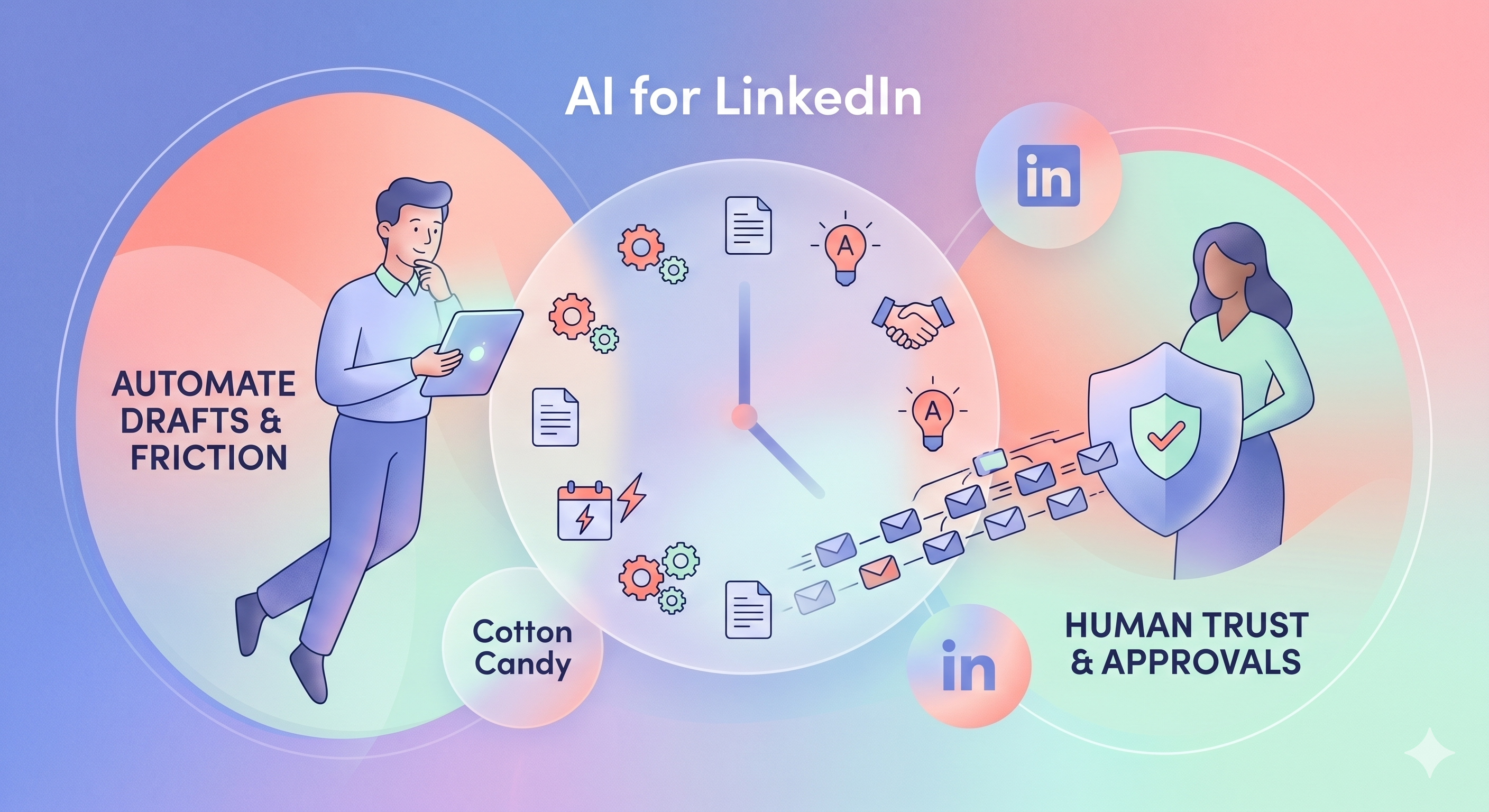

The useful frame is augmented judgement: models propose; humans decide what becomes public language, language you could defend in front of angry procurement counsel next quarter. Anything else treats your feed like an exhaust vent. That buyer-trust lens, meaning what people infer from your feed before they ever book a call, anchors how prospects evaluate LinkedIn credibility.

2. Scaffolding you can accelerate—if review stays real

Outline and beat generation helps when leadership still chooses among angles intentionally: optionality, not automatic selection. Repurposing long sources into first-pass LinkedIn scaffolds pairs well with teams already running capture workflows from notes and PDF material; machines summarise, humans fix quotes and delete sentences that invent meetings that never happened. Keep brand voice guidelines beside the compose box so assistants do not invent taboos. Hook variant labs that emit several first lines for the same proof point help you test tension without prematurely locking emotional frames. Calendar skeletons that propose weekly themes reduce blank-calendar anxiety, yet they do not replace strategic positioning: they buy schedule structure, not strategic conviction. Pair those rhythms with scheduling realism across time zones; if you split feed experiments from serialised curriculum, align with the newsletter versus feed posts guidance.

Alt-text drafting for images helps accessibility when you confirm what the image actually displays—models sometimes hallucinate chart labels. Comment triage sorting that clusters questions for humans beats auto-replies that fake empathy publicly. Hashtag shortlists rooted in your historical vocabulary beat random tags; still audit each post for irrelevance. Locale-aware tone passes can flag idioms that read harsher in other regions; final cultural judgement remains local.

Meeting-note summarisation into private bullet lists you later curate accelerates capture without dumping confidential minutes verbatim into the feed. In every case, treat model output as draft debt you pay down before publish—not finished voice.

3. Governance patterns that keep velocity without hiding responsibility

A two-person rule for sensitive posts prevents fear-driven approvals: the assistant or model drafts, and someone empowered to say no on legal or commercial grounds, without career fear, reviews. Publishing gates must not vanish when scheduling tools tempt one-click broadcast; entity Pages often need the legal review patterns described in profile versus Page routing. The same escalation applies before you automate polite messages: B2B DMs without spam matter as much as public posts here. Version fingerprints that record which model release and which brand-voice revision produced a draft help postmortems when something slips through.
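A version fingerprint and the two-person rule can be sketched as a small record. This is a minimal illustration, not a description of any product's data model: the class name, field names, and the 12-character digest length are all assumptions.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class DraftFingerprint:
    """Provenance for one draft. All field names are hypothetical."""
    model_release: str         # vendor's model or version label
    brand_voice_revision: str  # revision tag of the brand-voice document
    drafted_by: str            # person or assistant that produced the draft
    reviewed_by: str           # person empowered to say no ("" if none yet)

    def digest(self) -> str:
        # Sort keys so the hash is stable regardless of field order.
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()[:12]


def passes_two_person_rule(fp: DraftFingerprint) -> bool:
    # A reviewer must be named and must differ from the drafter.
    return bool(fp.reviewed_by) and fp.reviewed_by != fp.drafted_by
```

Storing the digest next to each scheduled post gives a postmortem something concrete to search for when a draft slips through.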

What “agents” should mean here is workflow stitching: capture → structuring → cadence reminders, with explicit halts before publish, not endless chat that scatters context. Contrast that with the undifferentiated chat habits described in Dynal vs ChatGPT: structure and approvals matter more than bragging about model size.
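The "explicit halt before publish" idea can be expressed as a tiny state machine. The sketch below is hypothetical throughout: stage names, the `DraftWorkflow` class, and the `ApprovalRequired` exception are illustrative, not any tool's API. Automated steps can advance a draft only as far as the approval stage; publishing requires a named human.

```python
from enum import Enum


class Stage(Enum):
    CAPTURE = 1
    STRUCTURE = 2
    CADENCE = 3
    AWAITING_APPROVAL = 4
    PUBLISHED = 5


class ApprovalRequired(Exception):
    """Raised when something tries to publish without human approval."""


class DraftWorkflow:
    def __init__(self) -> None:
        self.stage = Stage.CAPTURE
        self.approved_by = None

    def advance(self) -> Stage:
        # Automation may move forward, but never past the approval halt.
        if self.stage.value < Stage.AWAITING_APPROVAL.value:
            self.stage = Stage(self.stage.value + 1)
        return self.stage

    def approve(self, reviewer: str) -> None:
        if self.stage is not Stage.AWAITING_APPROVAL:
            raise ValueError("nothing is waiting for approval yet")
        self.approved_by = reviewer

    def publish(self) -> Stage:
        if self.stage is not Stage.AWAITING_APPROVAL or not self.approved_by:
            raise ApprovalRequired("a named human must approve before publish")
        self.stage = Stage.PUBLISHED
        return self.stage
```

The design choice worth copying is that `advance()` has no path to `PUBLISHED`; only `publish()` does, and it checks for a reviewer.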

4. Judgement you should keep human—especially under stress

Client confidentiality and anonymised anecdotes fail first, because models blend plausible details dangerously. Regulated claims spanning financial performance, health-adjacent analogies, or comparative legal outcomes need specialist review, not prompt-only adjudication. Partnership language implying formal collaborations requires partner marketing alignment before scheduling queues fire. Crisis and personnel topics need leadership judgement that models rarely possess. Competitive attacks risk reciprocal reputation damage; humans should weigh category-level critique carefully instead of auto-generating personalised attacks. Forward-looking hiring or investment optics belong near finance and counsel, not near clever templates.

Synthetic engagement rings, purchased comment pods, and sock-puppet dialogues might look automatable—yet they violate integrity expectations expressed in LinkedIn’s Professional Community Policies and erode trust faster than any creative efficiency gain. Treat them as disqualifying even when vanity metrics briefly glow.

5. Measuring automation—and tying savings to acquisition reality

Metrics without worshipping impressions

When automation cuts time-to-first-draft while preserving approval quality, ROI shows up in reclaimed senior hours and shorter approval cycles—not necessarily raw impression spikes drifting with network effects. Track hours saved, rework after publish, qualitative sales notes referencing authored posts, and incident counts where drafts invented facts. Compare cohorts seasonally—do not blame models for summer troughs you would have seen anyway. Improve throughput and reduce rework; do not chase applause alone.
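The tracking described above reduces to a few sums. The following is a minimal sketch that assumes you log one record per post; the `PostRecord` fields and the `summarise` helper are hypothetical names, and the baseline drafting time is whatever estimate your team already trusts.

```python
from dataclasses import dataclass


@dataclass
class PostRecord:
    draft_minutes_before: float   # typical manual drafting time for this kind of post
    draft_minutes_after: float    # drafting time with assistance
    reworked_after_publish: bool  # post needed edits or retraction once live
    invented_fact_incident: bool  # a draft contained a fact nobody could source


def summarise(records: list[PostRecord]) -> dict[str, float]:
    if not records:
        raise ValueError("no records to summarise")
    n = len(records)
    saved = sum(r.draft_minutes_before - r.draft_minutes_after for r in records)
    return {
        "hours_saved": saved / 60,
        "rework_rate": sum(r.reworked_after_publish for r in records) / n,
        "incident_count": float(sum(r.invented_fact_incident for r in records)),
    }
```

Run it per season rather than per week so that ordinary seasonal troughs are compared like for like.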

Pipeline signals

Connect draft-time savings to observable pipeline signals described in the acquisition playbook—did meetings referencing posts increase? Did discovery calls cite your language precisely? Did fewer mis-set expectations appear? If efficiency gains do not move those levers, reconsider where you spend automation calories.

6. Where Dynal fits—and procurement questions to answer early

Product mapping (verify live)

Dynal frames capture → draft → plan → review → publish with structured brand context, treating Brand DNA as maintainable guardrails rather than mystery prompt soup. Features evolve; verify scheduling fields, approval surfaces, and collaboration boundaries in-product rather than relying on stale screenshots. For marketing lenses, see LinkedIn Content System versus LinkedIn AI Writer; for a comparison against generic chat, see Dynal vs ChatGPT; for commercial reality, see pricing.

Procurement and tool shortlists

Enterprise buyers increasingly probe how vendor AI features handle data retention, subprocessors, and deletion—especially when drafts touch customer names or roadmap details. Even if your blog stack is not procurement’s direct target, your public LinkedIn voice becomes evidence in diligence. Document which systems store prompts, who can view them, and retention windows. Silence invites worst-case assumptions—worse than imperfect yet honest answers aligned with your actual practices. When you are shortlisting tools, not only policies, use how to choose LinkedIn tools as a matrix-level companion.

7. Failure modes, red-teaming, pauses, and recognising machine-shaped prose

Patterns that keep repeating

Teams skip staging and discover invented statistics in customer calls before finance does. Junior operators gain publish rights without escalation paths and ship bland safe language contradicting the edgy brand voice marketing claimed. Multilingual automation without fluent review publishes confident mistranslations. Executives promise thought leadership programmes then starve review calendars, producing AI-shaped mush that sounds credible yet says nothing testable.

Red-teaming before high-stakes announcements

Run a simple adversarial ritual on sensitive posts: ask what a sceptical prospect, journalist, or activist could misread; ask which numbers require footnotes; ask whether competitive claims could trigger legal review. Models can help list attack lines; humans decide which lines matter politically. This complements the tasteful hooks in the hooks guidance without turning posts into legal memos: just enough paranoia to spare public embarrassment later.

When to pause automation voluntarily

Pause heavy model assistance during active crises where words feel weightier than usual—restructurings, geopolitical flashpoints touching your people, industry-wide security incidents. Humans think slower for good reason; automated fluency during those windows feels profane even when technically allowed. Likewise pause when model updates suddenly shift tone or citation behaviour—observe new failure modes before trusting old templates.

Training reviewers on machine defaults

Teams benefit from a lightweight style check: repeated abstract nouns, symmetrical parallelisms, and “in today’s fast-paced world” scaffolding often signal unedited model defaults. Train reviewers to mark those patterns not because models are evil but because unedited defaults read generic on LinkedIn, where buyers hunt for specificity. Pair this with recurring study of strong posts in your types taxonomy so taste develops alongside tooling.
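Part of that style check can be mechanised as a pre-review hint. The sketch below is illustrative only: the word list, the threshold, and the function name are assumptions, and it does not attempt to detect symmetrical parallelisms, which still need a human eye.

```python
import re

# Illustrative patterns; a real list should come from your own reviewers.
STOCK_OPENER = re.compile(r"in today[’']s fast-paced world", re.IGNORECASE)
ABSTRACT_NOUNS = re.compile(
    r"\b(synergy|innovation|transformation|landscape)\b", re.IGNORECASE
)


def flag_machine_defaults(text: str, max_abstract: int = 2) -> list[str]:
    """Return human-readable flags; an empty list means nothing was matched."""
    flags = []
    if STOCK_OPENER.search(text):
        flags.append("stock opener")
    hits = ABSTRACT_NOUNS.findall(text)
    if len(hits) > max_abstract:
        flags.append(f"abstract nouns x{len(hits)}")
    return flags
```

A flag is a prompt for the reviewer to look closer, not a verdict; specific drafts can still trip it and generic ones can still pass.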

8. Roles, data hygiene, transparency, sandboxes, and edge cases

Stakeholders who stay in the loop

Legal touches regulated phrasing; HR touches people narratives; finance touches forward-looking claims about performance; customer success touches stories about customer outcomes—each should have a lightweight trigger defining when AI drafts must cross their desk. The goal is friction that prevents avoidable fires, not bureaucratic theatre that stops shipping entirely. Smaller teams stagger reviews by risk tier: low-risk industry observations may pass with marketing alone; client-specific stories escalate automatically.
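The trigger idea above maps naturally onto a lookup table. This is a minimal sketch under stated assumptions: the tag names and the `required_reviewers` helper are hypothetical, and the default of marketing-only review for untagged drafts mirrors the low-risk tier described in the paragraph.

```python
# Hypothetical content tags mapped to the desk that must review them.
TRIGGERS = {
    "regulated_phrasing": "legal",
    "people_narrative": "hr",
    "forward_looking_claim": "finance",
    "customer_outcome": "customer_success",
}


def required_reviewers(tags: set[str]) -> list[str]:
    """Marketing always reviews; each recognised tag adds its owner."""
    reviewers = {"marketing"}
    for tag in tags:
        if tag in TRIGGERS:
            reviewers.add(TRIGGERS[tag])
    return sorted(reviewers)
```

For example, a draft tagged `customer_outcome` and `forward_looking_claim` would route to customer success, finance, and marketing, while an untagged industry observation would pass with marketing alone.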

Data handling habits

Avoid pasting sensitive customer lists, private metrics, or identifiable medical analogies into unmanaged chat sessions that lack appropriate controls. When in doubt, summarise manually at a higher level and let the model expand from the summary you already vetted. Align with what your security team accepts—this is mundane hygiene, yet most reputation blowups start with convenience trumping discipline.

Optional transparency norms

Some leaders disclose AI assistance in certain post categories; others keep disclosure minimal except where regulators require transparency. Decide organisationally, not ad hoc, to avoid the contradiction where one executive advertises tooling and another hides it clumsily while sounding identical. Consistency preserves trust even when law stays silent, especially as audiences sharpen scepticism.

Sandboxed drafting versus production channels

Keep messy exploration in documents or approved internal tools; only move text into scheduling tools after it passes your checklist. The boundary sounds trivial until someone pastes a half-baked draft directly into a Page composer with auto-save and notification surfacing—then you fight retracting tone rather than improving ideas calmly. Sandboxing also lets you compare model versions side-by-side without polluting analytics on partial thoughts.

M&A chatter, insider-adjacent topics, and premature certainty

When markets speculate about your company or a customer’s future, AI drafts may confidently narrate futures nobody authorised. Default to silence or tightly lawyered language: models rarely respect the information asymmetries that humans understand politically. The same applies to roadmap timing details that could move expectations unfairly for employees reading leadership posts; escalate before publishing clever paragraphs that age badly over a weekend.

Conclusion

Automate scaffolding that saves typing and organising time; refuse automation where fiduciary trust, confidentiality, regulatory clarity, or moral tone require human backbone everyone can see. Keep approvals legible, measure throughput and rework instead of vanity alone, and treat models as assistants that propose—not authorities that decree your professional reputation. Reconcile automation metrics periodically with qualitative buyer feedback so guardrails evolve calmly rather than swinging between techno-optimism and moral panic each quarter. Keep a dated internal memo summarising which workflows involve assistants, where humans wield veto power, and which categories of data never enter external tools—buyers occasionally ask plainly, and teammates deserve coherent answers. During turbulent weeks—reorgs, security incidents—downshift from richly generated drafts to outline-plus-human drafting; slower drafts often outperform clever paragraphs you must retract publicly.

---

Frequently asked questions

Should AI ever publish LinkedIn content without a human in the loop?

Almost never. Reserve zero-touch experiments for internal sandboxes with no factual claims. Public voice tied to your name, your Page, or regulated industries still needs human approval, brand context, and liability thinking that models cannot supply. Treat automation as acceleration for outlines, hook variants, repurposing scaffolds, alt-text drafts, and calendar skeletons, each still reviewed before ship.

How do we stop hallucinated numbers, protect authenticity, and integrate brand voice documentation?

Separate quantitative claims from narrative flourish; block metrics from publishing without data owners’ sign-off. Authenticity fails when unreviewed machine tone ships—reviewed drafts aligned to brand voice guidance read human because humans curate them. Keep exemplars and refusal lists where assistants look first; refresh policy quarterly or after model upgrades change behaviour materially.

Can AI assist DMs, scheduling, or newsletters without crossing ethical lines?

Draft DMs only after you have earned context in public, and never batch synthetic empathy; follow B2B DM ethics. Schedule with time zone discipline and honest reply coverage, not mythic “best hour” charts. Use models to outline newsletter chapters but let humans own promises across instalments; see newsletter versus feed.

What about regulated sectors, engagement pods, and buying the wrong tool?

Layer compliance review; treat blog guidance as operational context only—escalate to counsel for binding rules in your jurisdiction. Engagement pods remain off-limits: manufactured engagement poisons trust. Tool mistakes usually mean buying features without approval workflow fit—see how to choose LinkedIn tools.

---

Ethics and compliance depend on jurisdiction and facts on the ground; involve counsel for binding rules. This article offers operational judgement, not legal advice. Teams spanning borders should map AI usage to regional employment and publicity norms affecting executive speech, not only to conventional marketing regulations.